
Creating Latent Representations of Synthesizer Patches using Variational Autoencoders

Publication Details

This paper appeared at the 2023 International Symposium on the Internet of Sounds in Pisa, Italy.

Paper Link: https://ieeexplore.ieee.org/document/10335466

Summary

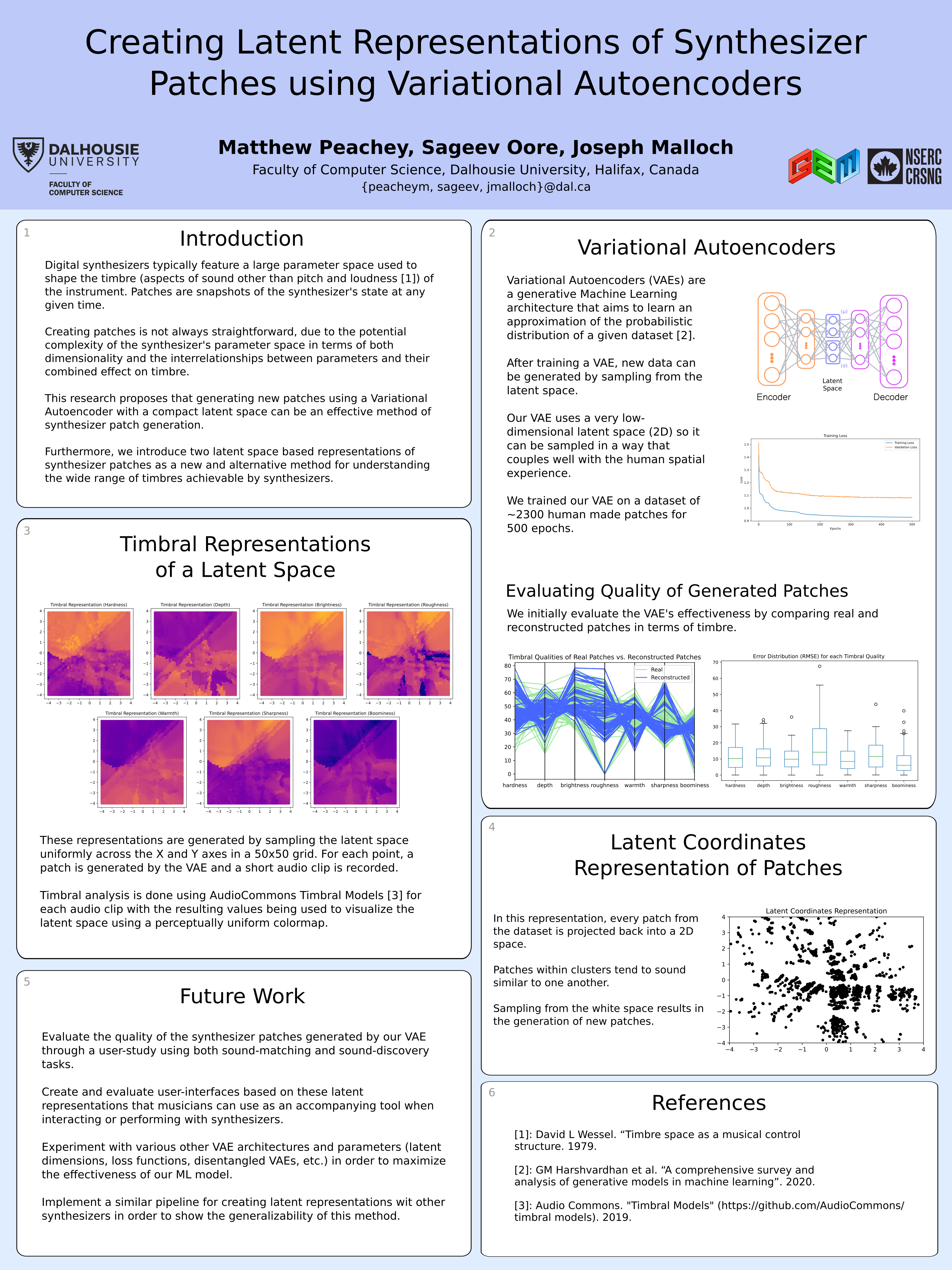

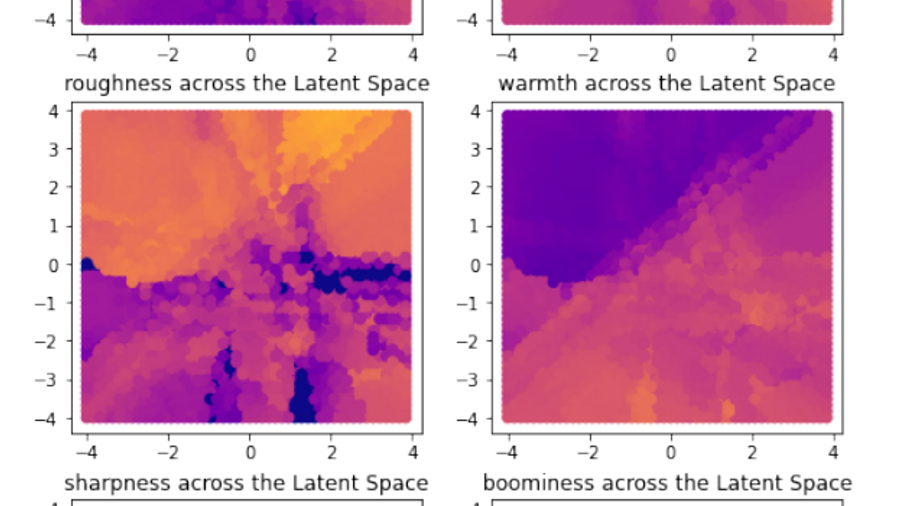

This paper presents two representations of synthesizer patches: “Timbral Representations” and “Latent Coordinates”. We show how we trained a VAE on an existing dataset of synthesizer patches and how the latent space of that generative model can be used both to explore existing patches and to create entirely new ones.
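The authors' VAE architecture is provided on GitHub and is not reproduced here. As a rough illustration of what training a VAE on patches involves, the sketch shows the two terms of a common VAE objective applied to a patch stored as a flat vector of parameter values. It assumes every amSynth parameter has been scaled to [0, 1]. The function names, the squared-error reconstruction term, and the `beta` weight are illustrative assumptions, not the paper's implementation.

```python
import math
import random
from typing import List

Vector = List[float]


def reparameterize(mu: Vector, log_var: Vector, rng: random.Random) -> Vector:
    """Reparameterization trick: z = mu + sigma * eps, with eps ~ N(0, 1) per dimension."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, log_var)]


def kl_divergence(mu: Vector, log_var: Vector) -> float:
    """Closed-form KL(N(mu, diag(sigma^2)) || N(0, I)), summed over latent dimensions."""
    return -0.5 * sum(1.0 + lv - m * m - math.exp(lv) for m, lv in zip(mu, log_var))


def reconstruction_error(patch: Vector, reconstructed: Vector) -> float:
    """Sum of squared errors over normalized patch parameters (assumed scaled to [0, 1])."""
    return sum((a - b) ** 2 for a, b in zip(patch, reconstructed))


def vae_loss(patch: Vector, reconstructed: Vector, mu: Vector, log_var: Vector,
             beta: float = 1.0) -> float:
    """Reconstruction term plus a beta-weighted KL term; training minimizes this value."""
    return reconstruction_error(patch, reconstructed) + beta * kl_divergence(mu, log_var)


# Hypothetical encoder outputs (mean and log-variance) for a single patch.
rng = random.Random(0)
mu, log_var = [0.2, -0.1], [-1.0, -0.5]
z = reparameterize(mu, log_var, rng)
print("sampled latent point:", z)
print("KL term:", kl_divergence(mu, log_var))
```

The KL term pulls the encoded patches toward a standard normal distribution. That regularity is what makes it reasonable to sample or move through the latent space and still decode plausible parameter settings.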

The VAE architecture, training notebook, GUI demo, and more are available on GitHub through this link.

Authors

Matthew Peachey, Sageev Oore and Joseph Malloch

Abstract

Digital synthesizers typically feature a user-adjustable parameter space (i.e., the set of user-adjustable parameters) that is used to shape the sound (or timbre) of the instrument. A synthesizer patch is a snapshot of the state of the instrument’s parameter space at a given time and is the representation most familiar to synthesizer users. Creating patches can often be repetitive, tedious, and complicated for synthesizers with large parameter spaces. This paper presents the creation and use of latent representations of synthesizer patches generated by training a Variational Autoencoder (VAE) on a library of existing patches. We demonstrate how to generate previously unseen patches by exploring this latent representation via interpolation through the latent space. Using the open-source synthesizer amSynth as a test bed, we evaluate reconstructed patches against a ground truth both numerically and timbrally, and show how generating new patches from the latent space results in diverse yet musically pleasing timbres.
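To make the interpolation idea concrete, here is a minimal sketch that decodes evenly spaced points on the straight line between the latent codes of two patches. It also includes a simple mean-absolute-error comparison of a reconstructed patch against its ground truth. `toy_decode` is a hypothetical stand-in for a trained VAE decoder that maps a 2-D latent point to three normalized parameters. The paper's latent dimensionality, decoder, and evaluation metrics may differ.

```python
from typing import Callable, List

Vector = List[float]


def lerp(z_a: Vector, z_b: Vector, t: float) -> Vector:
    """Linearly interpolate between latent points z_a (t=0) and z_b (t=1)."""
    return [(1.0 - t) * a + t * b for a, b in zip(z_a, z_b)]


def interpolate_patches(z_a: Vector, z_b: Vector,
                        decode: Callable[[Vector], Vector], steps: int) -> List[Vector]:
    """Decode `steps` evenly spaced latent points from z_a to z_b, endpoints included."""
    if steps < 2:
        raise ValueError("steps must be at least 2 to include both endpoints")
    return [decode(lerp(z_a, z_b, i / (steps - 1))) for i in range(steps)]


def mean_absolute_error(target: Vector, reconstruction: Vector) -> float:
    """Average absolute difference between ground-truth and reconstructed parameters."""
    return sum(abs(a - b) for a, b in zip(target, reconstruction)) / len(target)


def clamp01(x: float) -> float:
    """Keep a decoded parameter inside the assumed normalized range [0, 1]."""
    return min(1.0, max(0.0, x))


def toy_decode(z: Vector) -> Vector:
    """Hypothetical stand-in for a trained decoder: 2-D latent point -> 3 parameters."""
    return [
        clamp01(0.5 + 0.25 * z[0]),
        clamp01(0.5 + 0.25 * z[1]),
        clamp01(0.5 + 0.125 * (z[0] + z[1])),
    ]


# Walk from one (hypothetical) encoded patch to another in five steps.
patches = interpolate_patches([-2.0, 0.0], [2.0, 0.0], toy_decode, steps=5)
for patch in patches:
    print(patch)
```

Linear interpolation is used here for simplicity. With a Gaussian latent prior, spherical interpolation (slerp) is a common alternative because it keeps intermediate points at a more typical distance from the origin.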

Poster

We presented this poster at the IS2 conference and appreciate all the great feedback we received!