
MoveBox: Democratizing MoCap for the Microsoft Rocketbox Avatar Library

Publication Details

This paper appeared at IEEE AI/VR 2020.

Summary

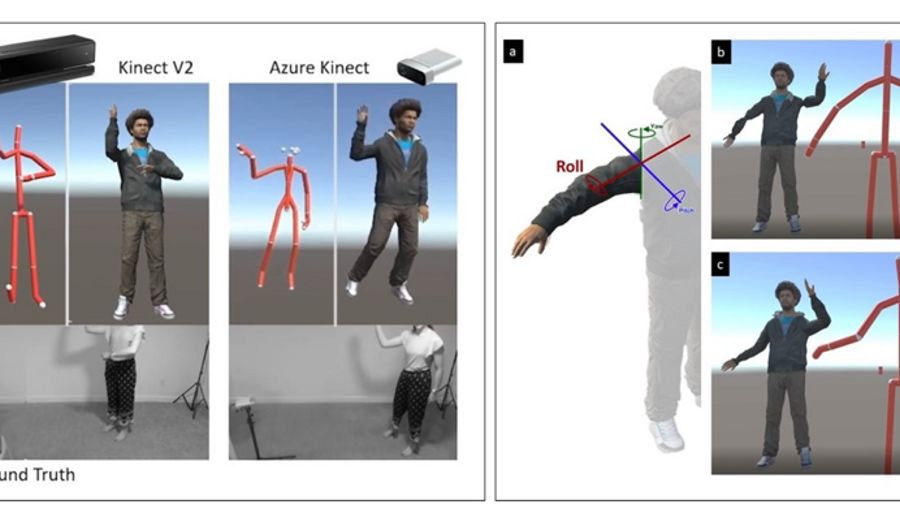

This paper presents MoveBox, an open-source toolbox for animating realistic virtual avatars from the Microsoft Rocketbox library using a Microsoft Kinect v2 or Azure Kinect depth sensor. The tool is implemented for Unity and lets users see their motion capture animated on an avatar in real time. Users can also save the captured motion as an animation asset and reuse it in their own Unity projects. This is especially useful for AR/VR projects whose developers are not animation experts but still want realistic motion.

Authors

Mar Gonzalez-Franco, Zelia Egan, Matthew Peachey, Angus Antley, Tanmay Randhavane, Payod Panda, Yaying Zhang, Cheng Yao Wang, Derek F. Reilly, Tabitha C Peck, Andrea Stevenson Won, Anthony Steed and Eyal Ofek.

Abstract

This paper presents MoveBox, an open-source toolbox for animating motion-captured (MoCap) movements onto the Microsoft Rocketbox library of avatars. Motion capture is performed in real time using a single depth sensor, such as Azure Kinect or Windows Kinect V2, or extracted offline from existing RGB videos using deep-learning computer vision techniques. Our toolbox enables real-time animation of the user’s avatar by converting the transformations between systems that have different joints and hierarchies. Additional features of the toolbox include recording, playback, and looping of animations, basic audio lip sync, blinking, resizing of avatars, and finger and hand animations. Our main contribution is the creation of this open-source tool, together with its validation on different devices and a discussion of MoveBox’s capabilities by end users.
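
To make the idea of converting transformations between systems with different joints and hierarchies more concrete, here is a minimal Python sketch. It is not MoveBox's code (the toolbox itself is a Unity project), and everything in it is an assumption for illustration: the joint and bone names, the tiny three-joint mapping, and the simplification that the sensor reports world-space orientations as unit quaternions. It ignores rest-pose offsets and coordinate-system differences, which a real retargeting step would have to handle.

```python
from typing import Dict, Optional, Tuple

Quat = Tuple[float, float, float, float]  # (w, x, y, z), assumed unit length


def q_mul(a: Quat, b: Quat) -> Quat:
    """Hamilton product a * b."""
    aw, ax, ay, az = a
    bw, bx, by, bz = b
    return (
        aw * bw - ax * bx - ay * by - az * bz,
        aw * bx + ax * bw + ay * bz - az * by,
        aw * by - ax * bz + ay * bw + az * bx,
        aw * bz + ax * by - ay * bx + az * bw,
    )


def q_conj(q: Quat) -> Quat:
    """Conjugate, which is the inverse for a unit quaternion."""
    w, x, y, z = q
    return (w, -x, -y, -z)


# Hypothetical mapping from sensor joint names to avatar bone names.
SENSOR_TO_AVATAR: Dict[str, str] = {
    "SpineBase": "Pelvis",
    "ShoulderLeft": "L_UpperArm",
    "ElbowLeft": "L_Forearm",
}

# Hypothetical avatar hierarchy. "L_Clavicle" has no sensor counterpart,
# which is the kind of mismatch between skeletons the abstract refers to.
AVATAR_PARENT: Dict[str, Optional[str]] = {
    "Pelvis": None,
    "L_Clavicle": "Pelvis",
    "L_UpperArm": "L_Clavicle",
    "L_Forearm": "L_UpperArm",
}


def retarget_to_local(sensor_world: Dict[str, Quat]) -> Dict[str, Quat]:
    """Turn world-space sensor orientations into per-bone local rotations."""
    avatar_world = {
        SENSOR_TO_AVATAR[joint]: q
        for joint, q in sensor_world.items()
        if joint in SENSOR_TO_AVATAR
    }
    local: Dict[str, Quat] = {}
    for bone, q in avatar_world.items():
        # Walk up to the nearest ancestor that the sensor actually tracks.
        # Assumption: untracked bones in between stay at identity rotation.
        parent = AVATAR_PARENT[bone]
        while parent is not None and parent not in avatar_world:
            parent = AVATAR_PARENT[parent]
        if parent is None:
            local[bone] = q  # root bone: local equals world
        else:
            # local = inverse(parent_world) * child_world
            local[bone] = q_mul(q_conj(avatar_world[parent]), q)
    return local
```

Calling `retarget_to_local` with one world-space quaternion per tracked sensor joint returns a dictionary keyed by avatar bone name. In an engine such as Unity, the equivalent values would be assigned to each bone's local rotation every frame.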